Proceedings

Featured Presentations

How Siemens Energy Enables the Global Energy Transition

What’s New in MATLAB and Simulink R2023a

Dr. Jason Ghidella, MathWorks

Project-Based Learning and Design with Simulation

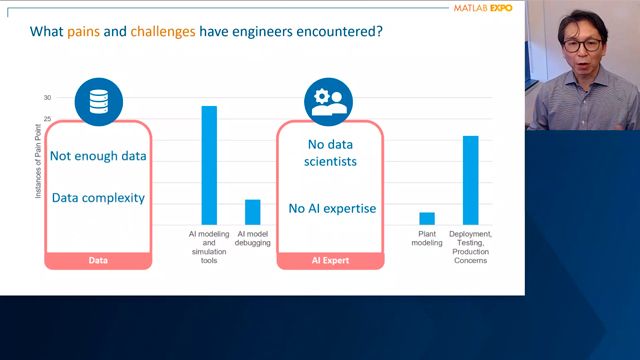

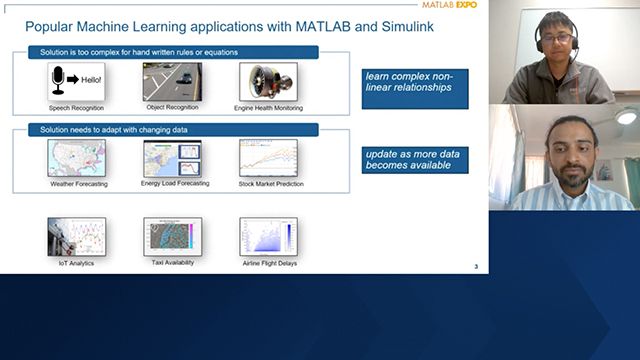

AI

ANA's Predictive Maintenance Challenge: Replace Aircraft Parts Before They Break

Naoya Kaido, All Nippon Airways Co., Ltd.

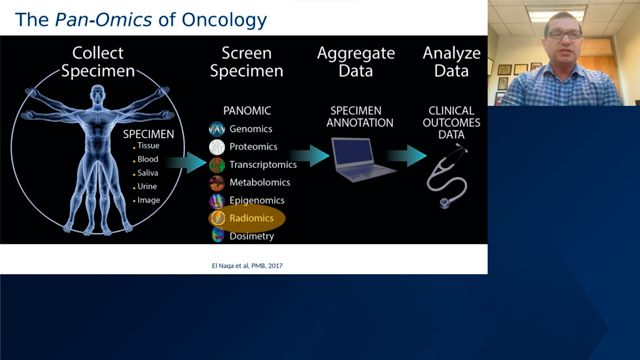

Machine Learning for Cancer Research and Discovery

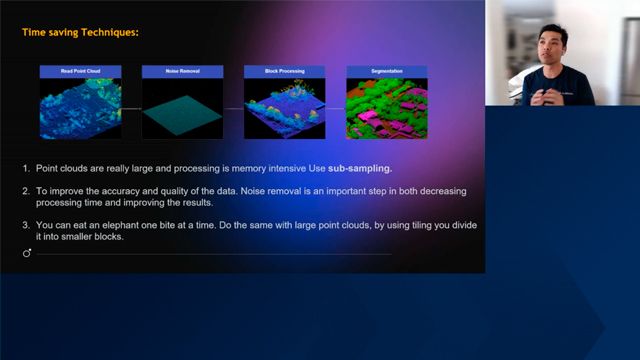

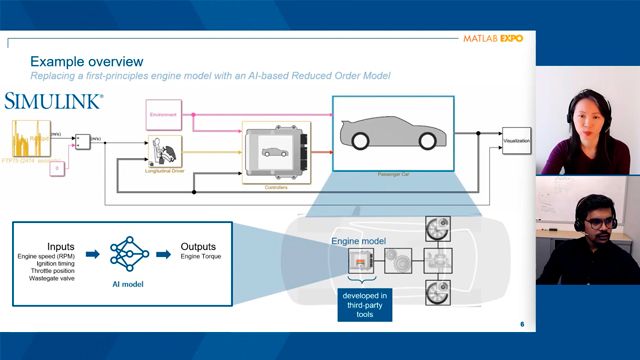

Reduced Order Modeling with AI: Accelerating Simulink Analysis and Design

Terri Xiao, MathWorks

Algorithm Development and Data Analysis

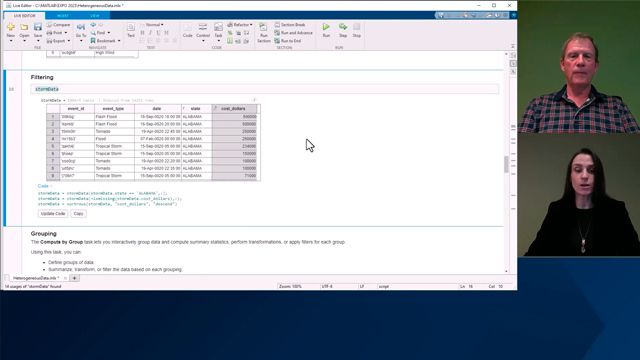

Cell Array, Table, Timetable, Struct, or Dictionary? Choosing a Container Type

David Garrison, MathWorks

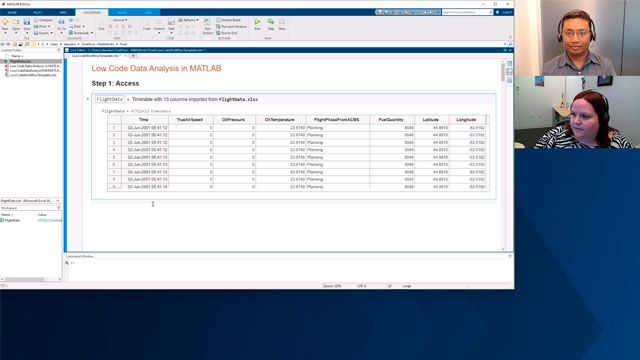

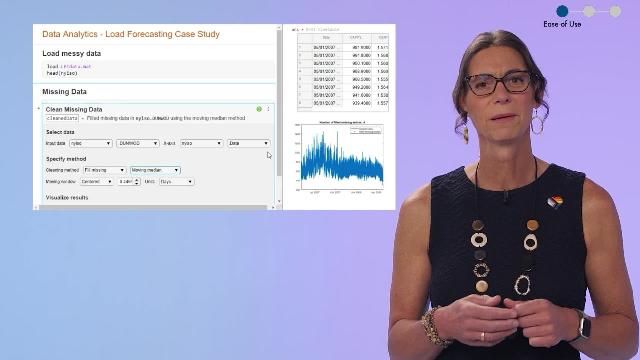

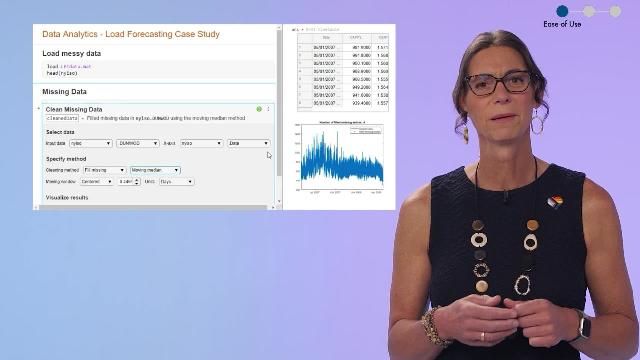

Low Code Data Analysis in MATLAB

Lola Davidson, MathWorks

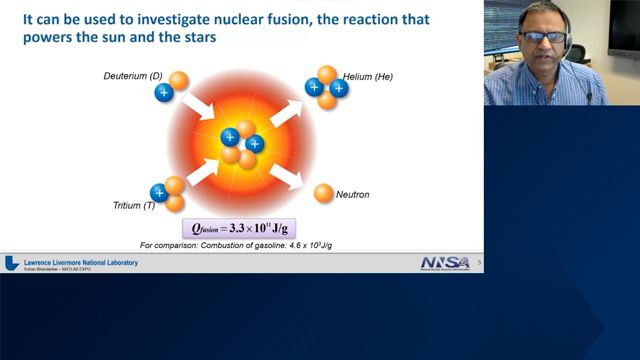

MATLAB for Control of Cryogenic DT Fuel for Nuclear Fusion Ignition Experiments

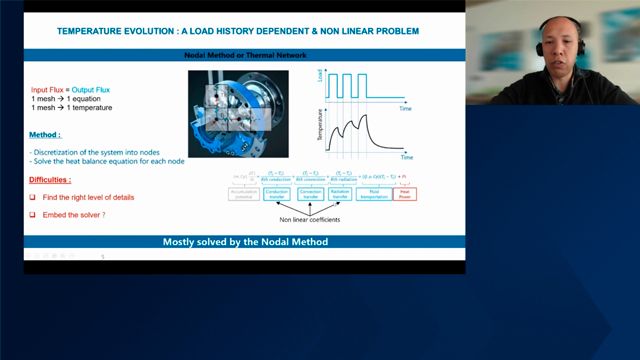

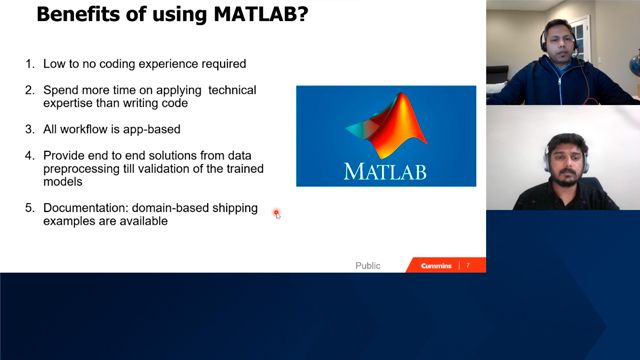

Process of Building AI Models for Predicting Engine Performance and Emissions

Shakti Saurabh, Cummins

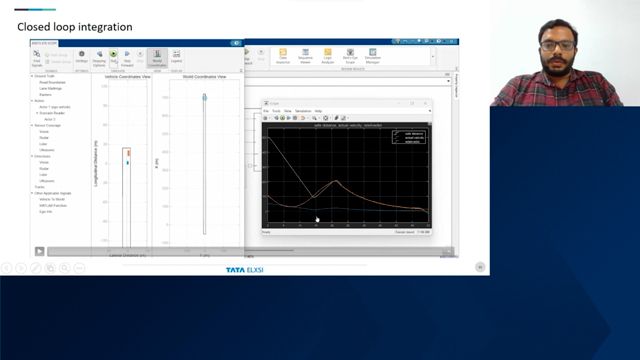

Autonomous Systems and Robotics

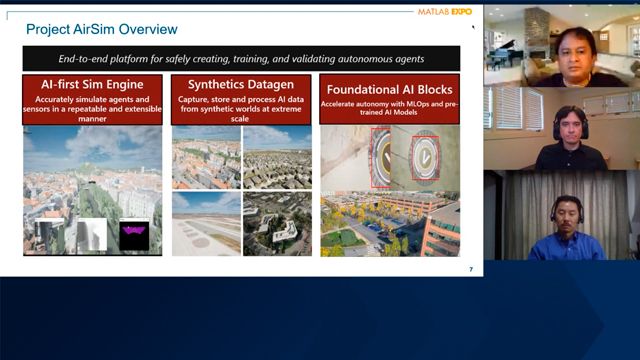

Accelerate Aerial Autonomy with Simulink and Microsoft Project AirSim

Balinder Malhi, Microsoft

Fred Noto, MathWorks

Applying AI to Enable Autonomy in Robotics Using MATLAB

Tohru Kikawada, MathWorks

Bringing the iCub Humanoid Towards Real-World Applications

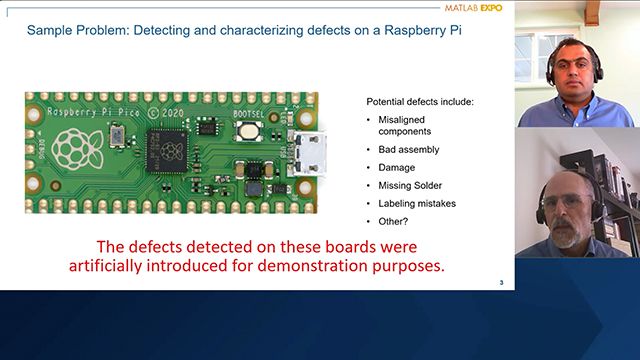

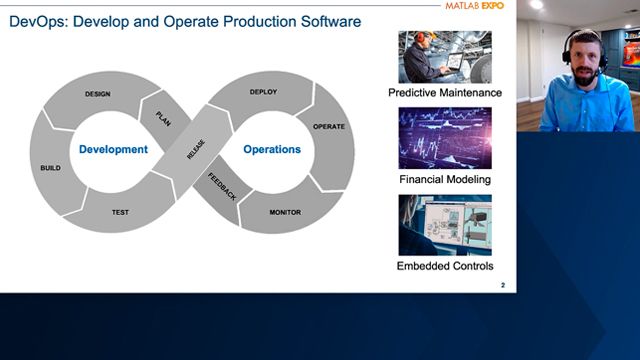

Cloud, Enterprise, and DevOps

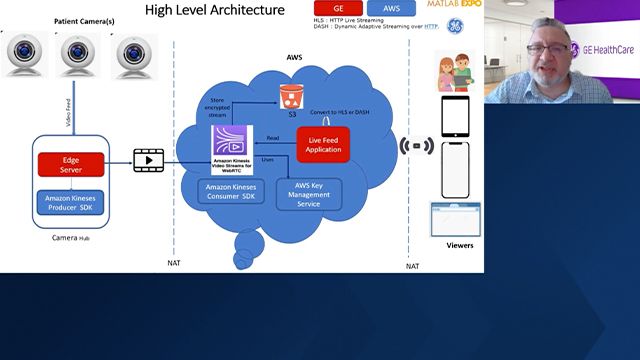

A Cloud-Based MATLAB Visual Inspection System

Arvind Hosagrahara, MathWorks

DevOps with MATLAB: A Predictive Maintenance System for Streaming Data

Nicole Bonfatti, MathWorks

Christine Bolliger, MathWorks

Running MATLAB Machine Learning Jobs in Amazon SageMaker

Gandharv Kashinath, MathWorks

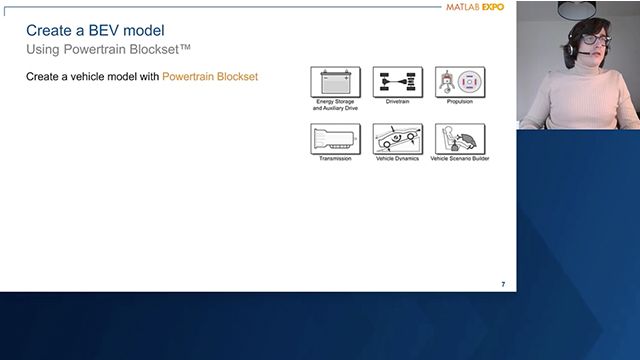

Electrification

Optimize EV Battery Performance Using Simulation

Dr. Danielle Chu, MathWorks

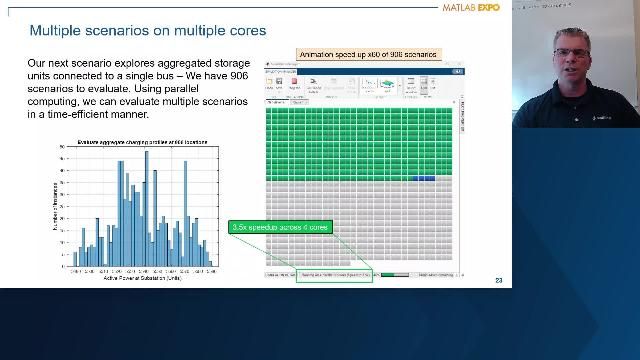

Techno-Economic Analysis of the Impact of EV Charging on the Power Grid

Chris Lee, MathWorks

Ruth-Anne Marchant, MathWorks

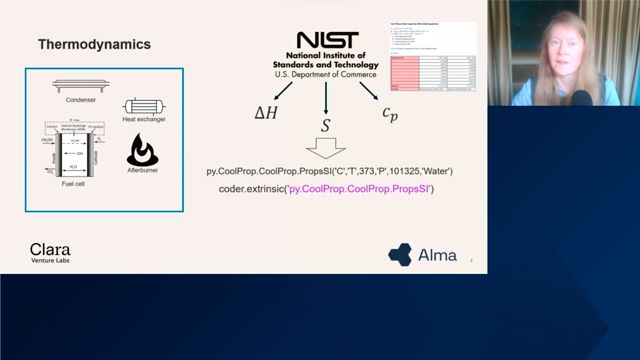

Toward Zero-Emission Shipping with Fuel Cells and Model-Based Design

Inclusive Innovation: Technology by and for All

APAC/EMEA Inclusive Innovation: Technology by and for All

Daniela Dobreva-Nielsen, AZO Anwendungszentrum GmbH Oberpfaffenhofen

Mohan Sundaram, ARTILAB Foundation

Eva Pelster, Moderator, MathWorks

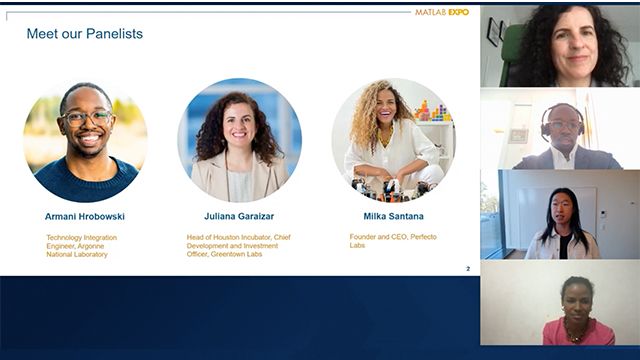

AMER Inclusive Innovation: Technology by and for All

Milka Santana, Perfecto Labs

Armani Hrobowski, Argonne National Laboratory

Ji Yang Luo, Moderator, MathWorks

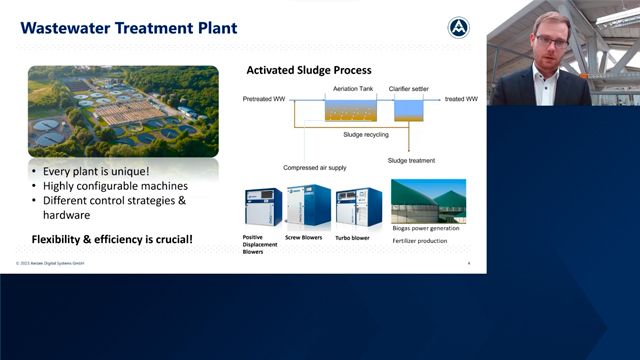

Modeling, Simulation, and Implementation

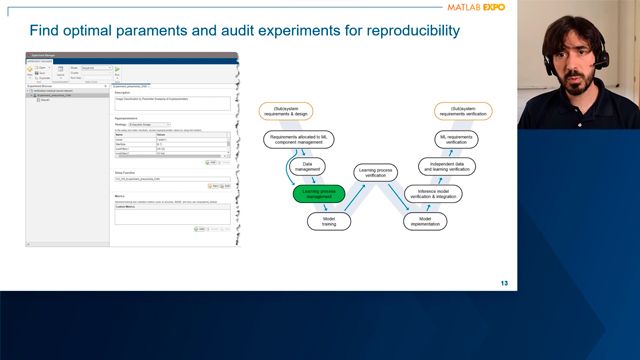

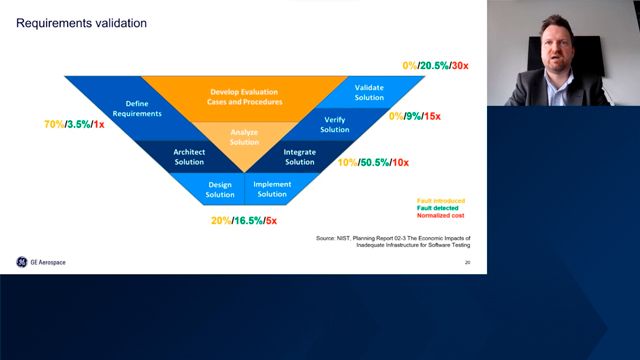

Formalizing Requirements and Generating Requirements-Based Test Cases

Dallas Perkins, MathWorks

Yann Debray, MathWorks

Plenary

How Siemens Energy Enables the Global Energy Transition

What’s New in MATLAB and Simulink R2023a

Dr. Jason Ghidella, MathWorks

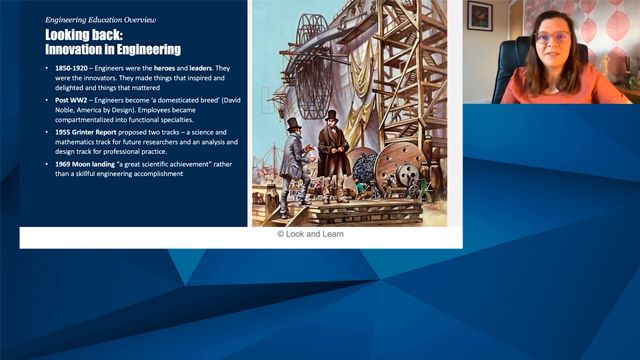

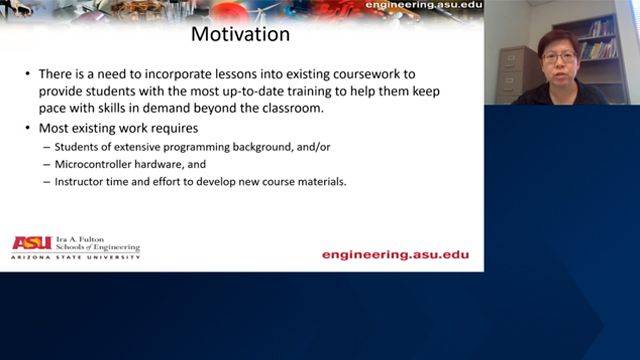

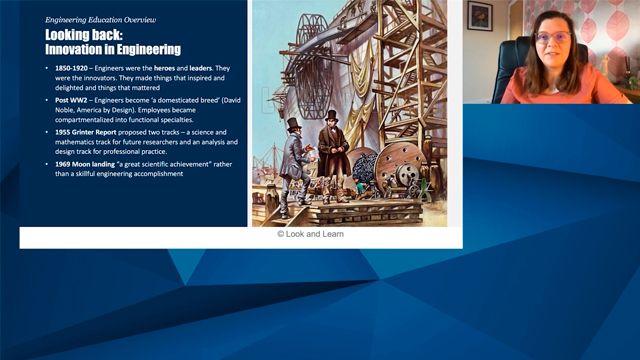

Project-Based Learning and Design with Simulation

Preparing Future Engineers and Scientists

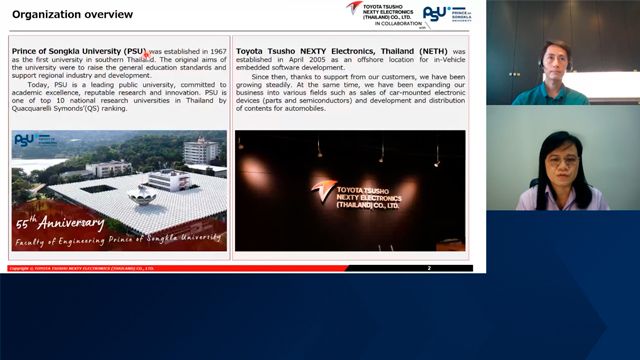

NETH and University Collaboration for Talent Workforce Development

Nattha Jindapetch, Prince of Songkla University (PSU)

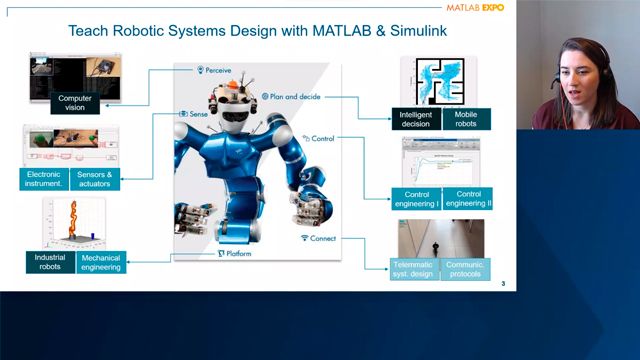

Teaching Robotics and Controls Made Easier

Melda Ulusoy, MathWorks

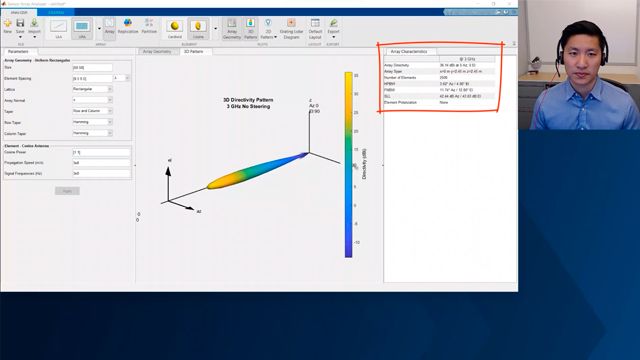

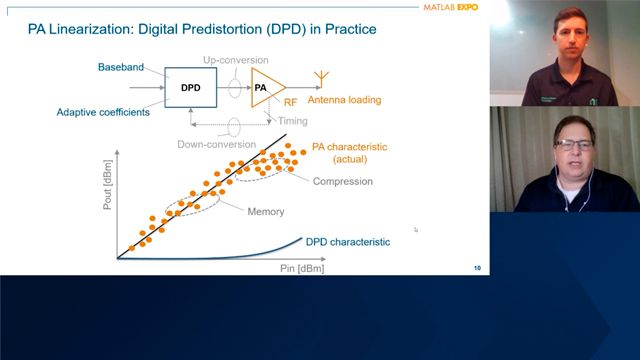

Wireless Connectivity and Radar

6G Wireless Technology: Accelerate Your R&D with MATLAB

Dr. Houman Zarrinkoub, MathWorks

Transforming Wireless System Design with MATLAB and NI

Jeremy Twaits, NI