Proceedings

Featured Presentations

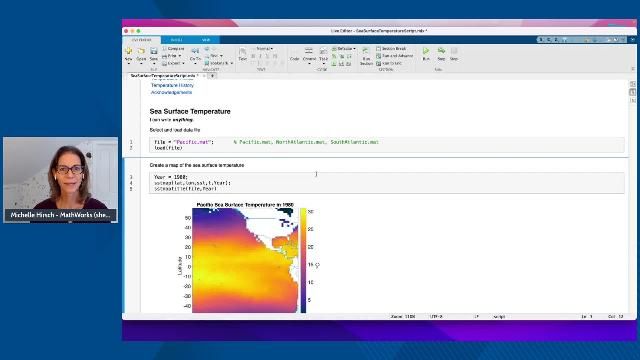

What’s New in MATLAB and Simulink R2022a

Michael Carone, MathWorks

Advancing AI and Data Science Through Industry/Academia Collaboration

Plenary

What’s New in MATLAB and Simulink R2022a

Michael Carone, MathWorks

Rolls-Royce Pathway to Net Zero

Advancing AI and Data Science Through Industry/Academia Collaboration

The Electronic System Architecture Modeling (eSAM) Method

How Is Shell Driving Its AI Future?

Amjad Chaudry, Shell International Ltd.

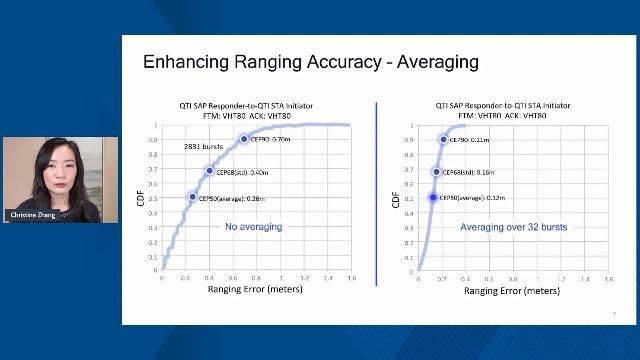

5G, Wireless, and Radar

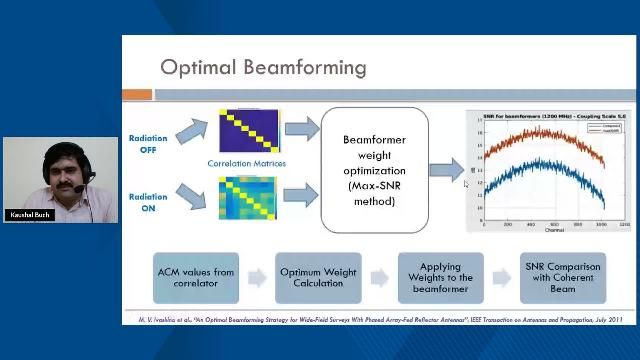

System-level Simulation of an Aperture Array Beamformer

Sreekar Sai Ranganathan, Indian Institute of Technology Madras

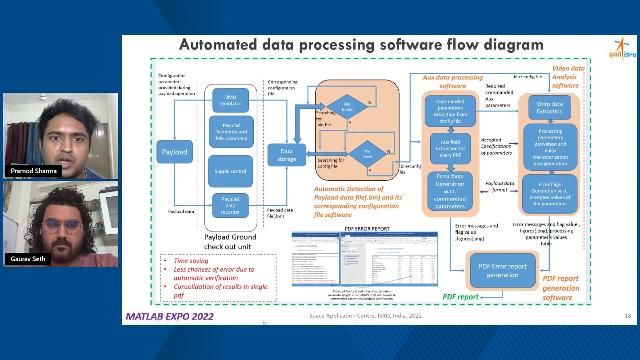

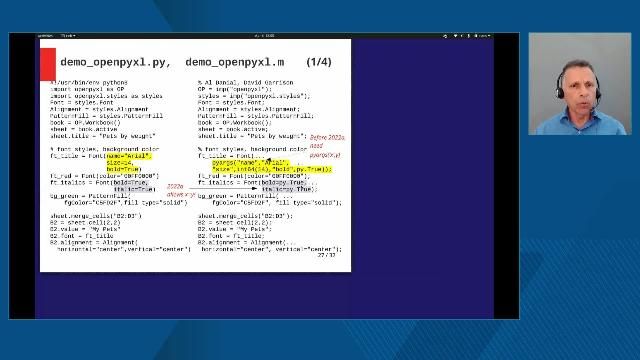

System Design and Automated Error Report Generation Data Processing Software Development

Pramod Sharma, Space Applications Centre, ISRO

5G Vulnerability Analysis with Reinforcement Learning Toolbox

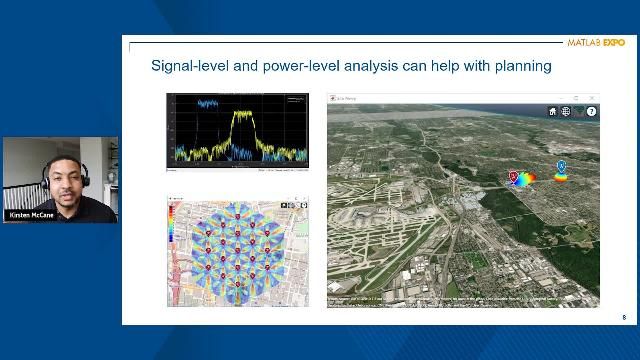

Modeling Radar and Wireless Coexistence

Giorgia Zucchelli, MathWorks

Kirsten McCane, MathWorks

Wireless Standards and AI: Enabling Future Wireless Connectivity

John Wang, MathWorks

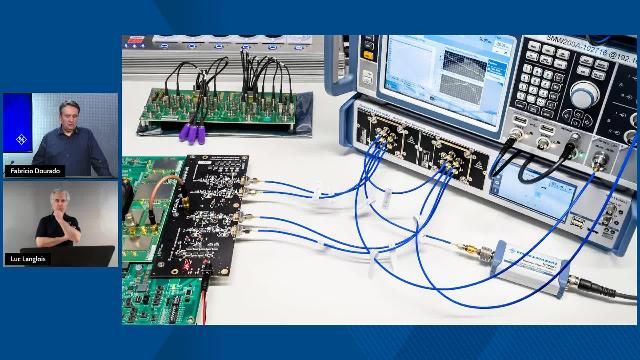

Secure, Automated, Internet-Based mmWave Test and Measurement with Xilinx RFSoC

Fabrício Dourado, Rohde & Schwarz

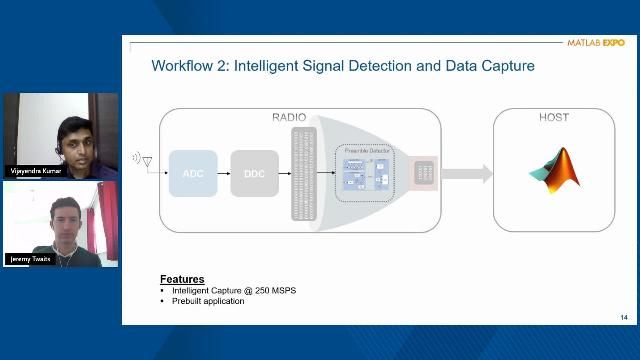

Connecting MATLAB to USRP for Wireless System Design

Jeremy Twaits, NI

AI in Engineering

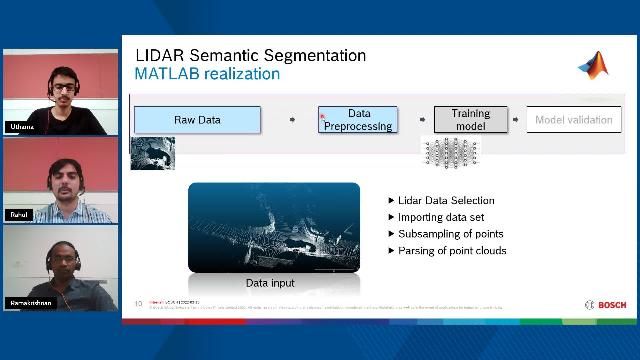

Designing a Lidar Sensor Classifier Using a MATLAB Framework

Porwal Rahul Vikram, Bosch Global Software Technologies Private Limited

Kadengodlu Uthama, Bosch Global Software Technologies Private Limited

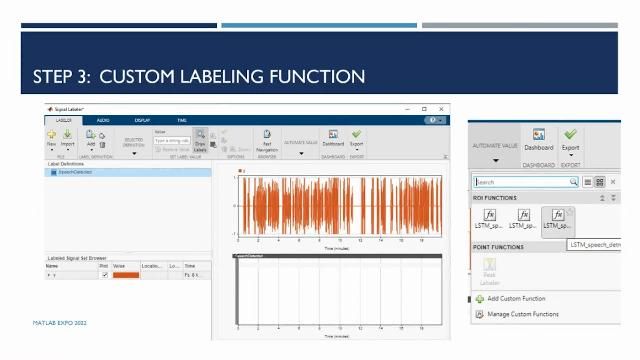

Automating an Audio Labeling Workflow with Deep Learning for Voice Activity Detection

Vasantha Paulraj, Honeywell

MATLAB with TensorFlow and PyTorch for Deep Learning

Yann Debray, MathWorks

Sivylla Paraskevopoulou, MathWorks

Fitting AI Models for Embedded Deployment

Emelie Andersson, MathWorks

Machine Learning with Simulink and NVIDIA Jetson

Bill Chou, MathWorks

Algorithm Development and Data Analysis

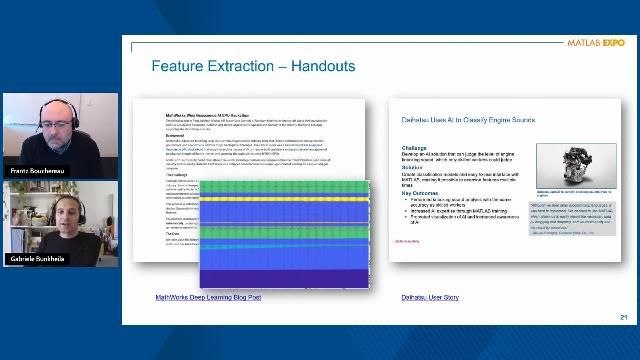

Data-Centric AI for Signal Processing Applications

Frantz Bouchereau, MathWorks

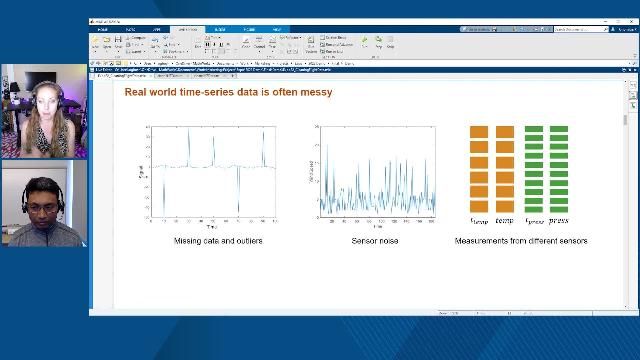

Cleaning and Preparing Time Series Data

Heather Gorr, MathWorks

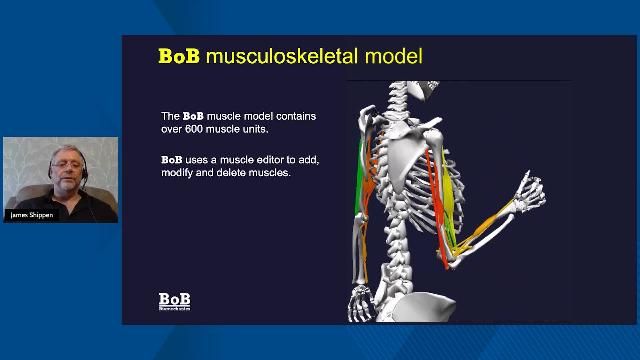

Biomechanical Analysis and Visualization

Autonomous Systems and Robotics

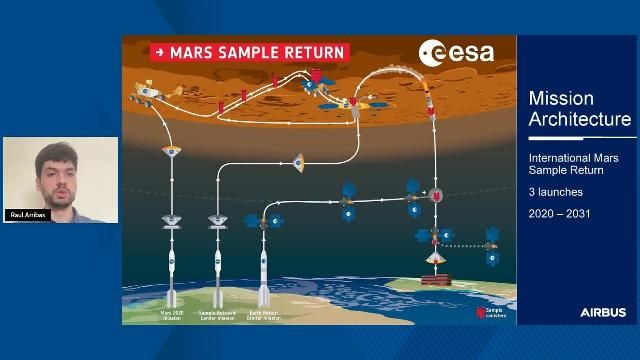

Mars Sample Fetch Rover: Autonomous, Robotic Sample Fetching

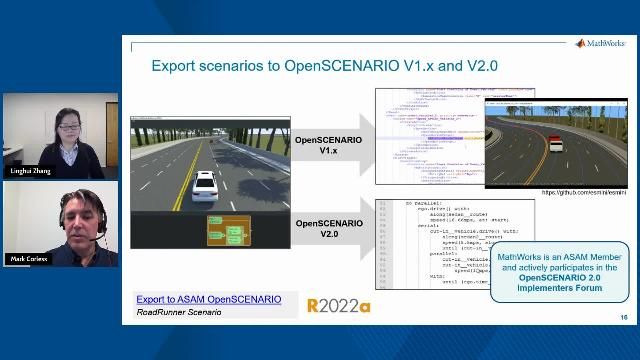

Design and Simulate Scenarios for Automated Driving Applications

Linghui Zhang, MathWorks

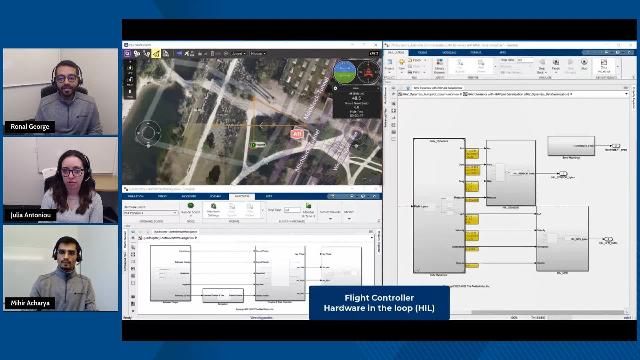

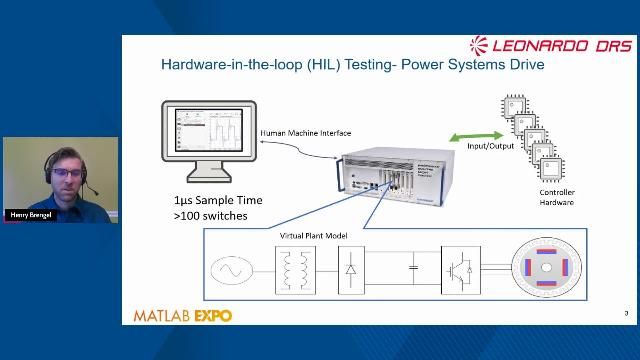

Simulate and Deploy UAV Applications with SIL and HIL Workflows

Ronal George, MathWorks

Julia Antoniou, MathWorks

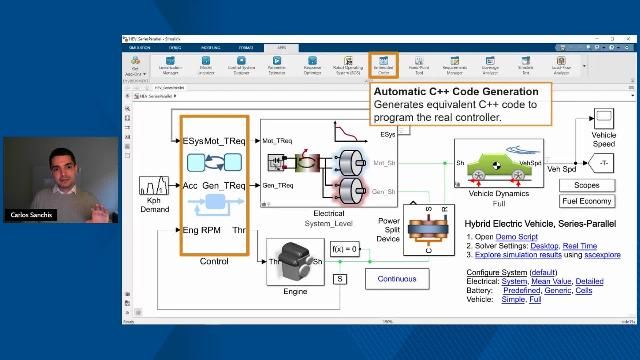

Electrification, Motor Control, and Power Systems

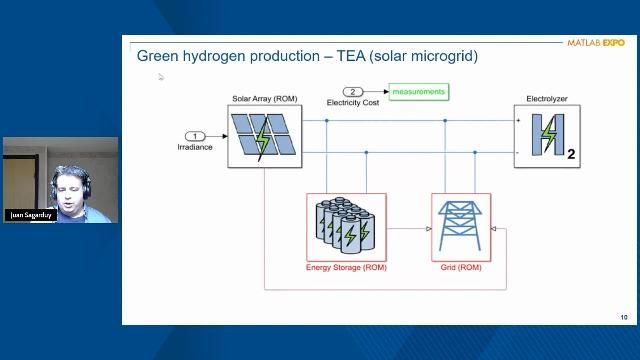

Enabling the Green Hydrogen Supply Chain with MATLAB and Simulink

Vasco Lenzi, MathWorks

Maria Fernandez, MathWorks

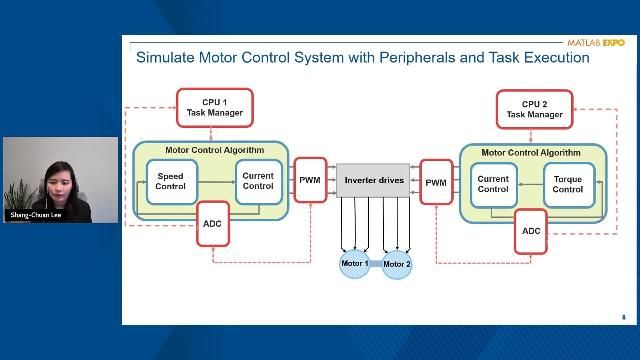

Deploying Motor Control Algorithms to a TI C2000 Dual-Core Microcontroller

Peeyush Kumar, MathWorks

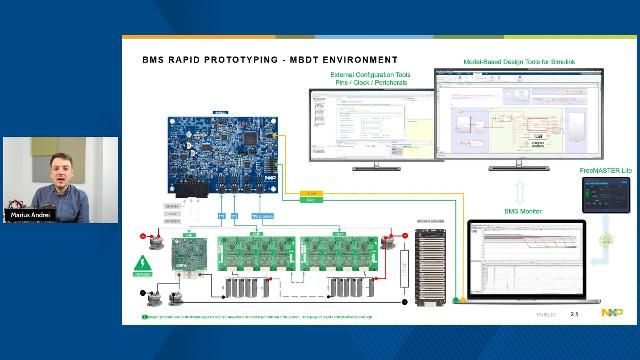

Rapid Prototyping of Embedded Designs Using NXP Model-Based Design Toolbox

Marius Lucian Andrei, NXP Semiconductors

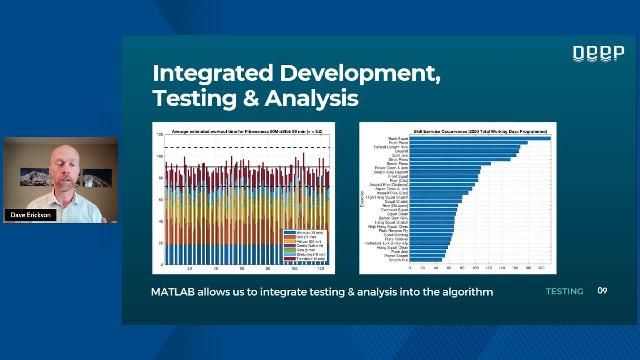

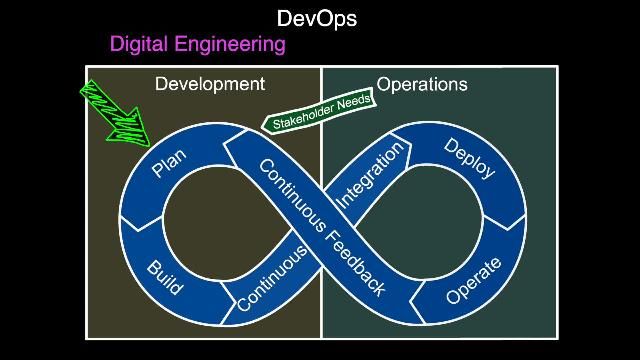

Implementation and DevOps

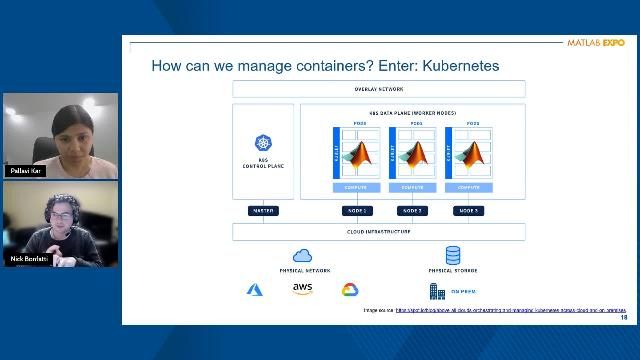

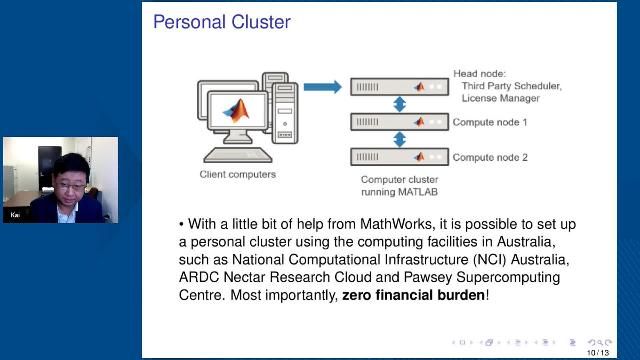

Deploying Cloud-Native MATLAB Algorithms in Kubernetes

Pallavi Kar, MathWorks

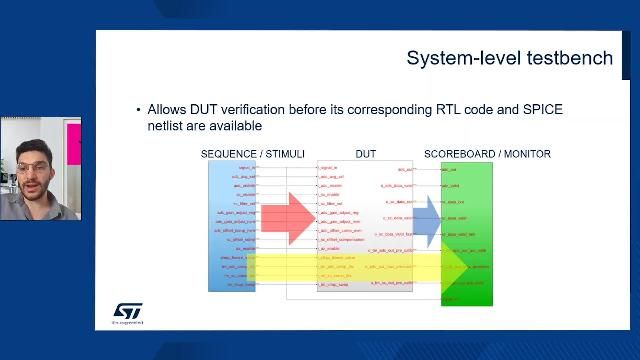

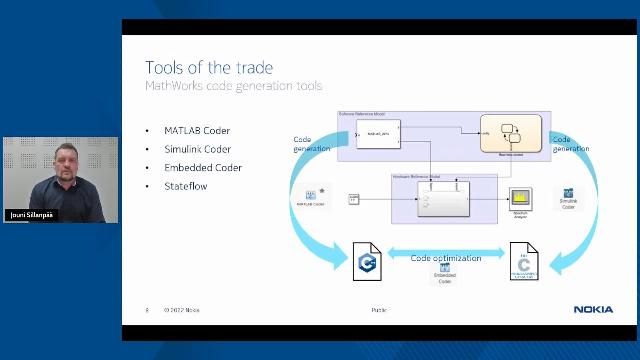

Reuse of Simulink Components Within Chip-Level Design and Verification Environments

Diego Alagna, STMicroelectronics

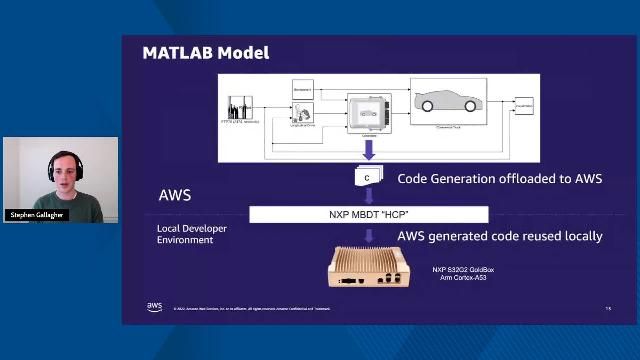

Automotive DevOps for Model-Based Design with AWS

Haydn Peterswald, Amazon Web Services (AWS)

Modeling and Simulation

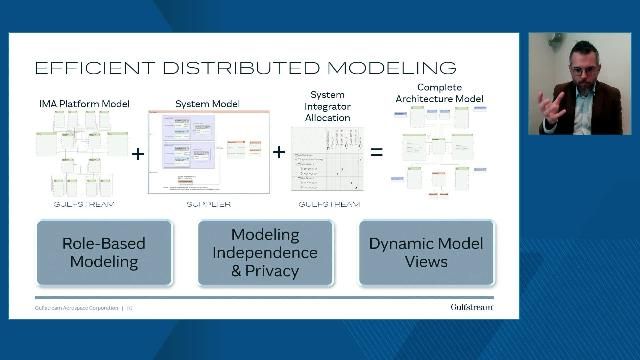

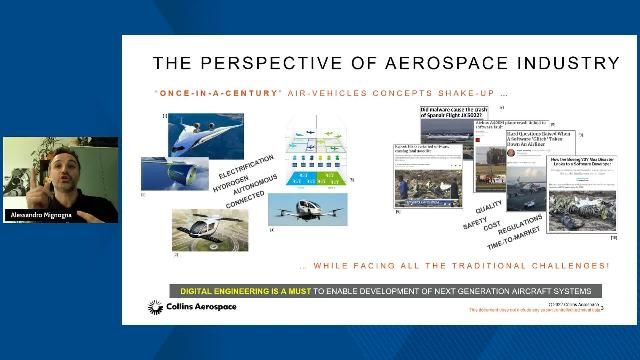

Models Exchange and Virtual Integration with MATLAB and Simulink

Alessandro Mignogna, Collins Aerospace Applied Research and Technology

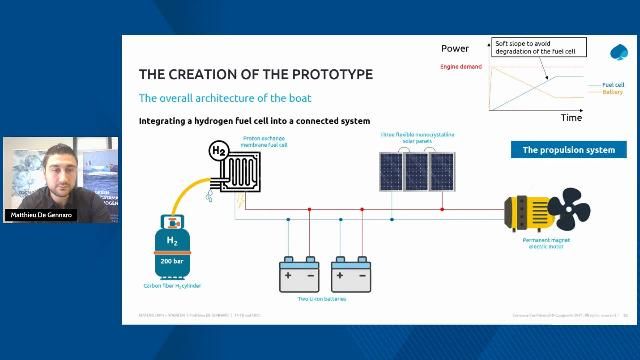

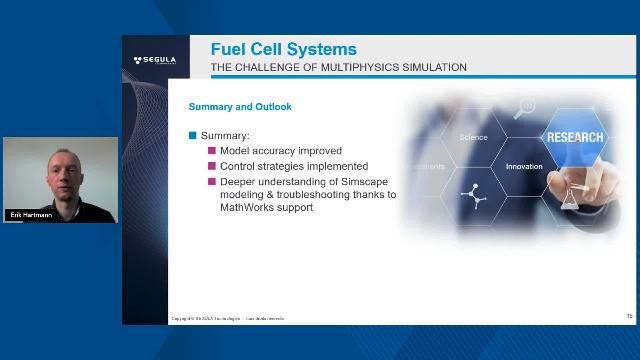

Fuel Cell Systems: A Challenge of Multiphysical Simulations

Using AI to Estimate Battery State of Charge (SoC)

Javier Gazzarri, MathWorks

Preparing Future Engineers

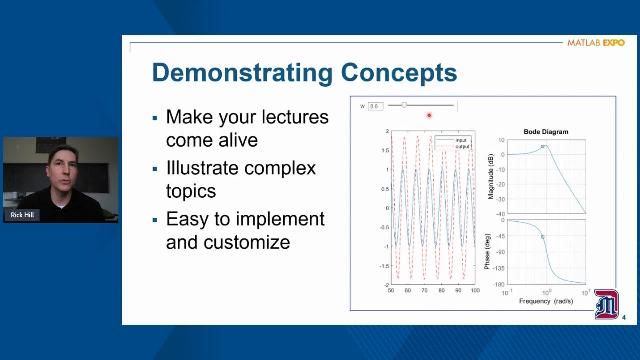

Digital Transformation in Education: Lightning Round

Dr. Rick Hill, University of Detroit Mercy

Dr. Rajakarunakaran S., Ramco Institute of Technology

Dr. Kristopher Ray S. Pamintuan, Mapúa University

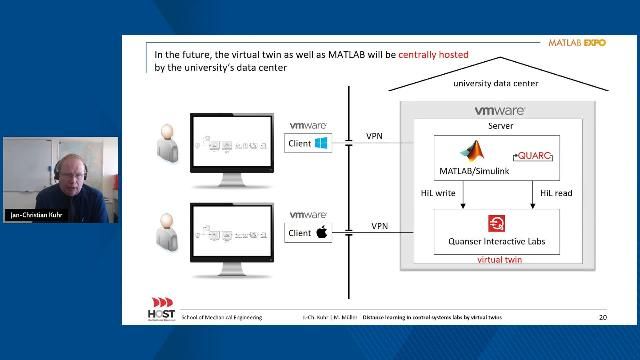

Using Virtual Twins for Distance Learning in Control Systems Labs

Martina Müller, Hochschule Stralsund – University of Applied Sciences

Electrification, AI, and the Future of Engineering Education

Sumit Tandon, MathWorks

Preparing Engineers for the Growing AI Workforce

Maria Elena Gavilán Alfonso, MathWorks

Systems Engineering

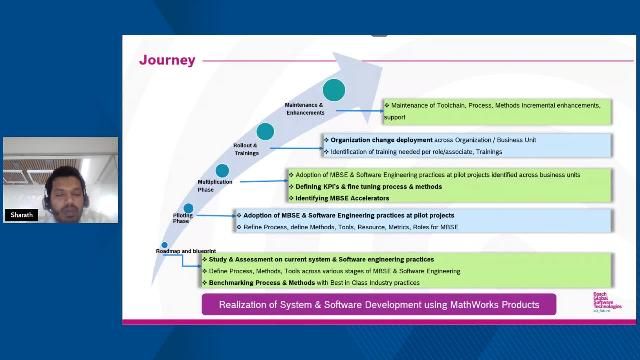

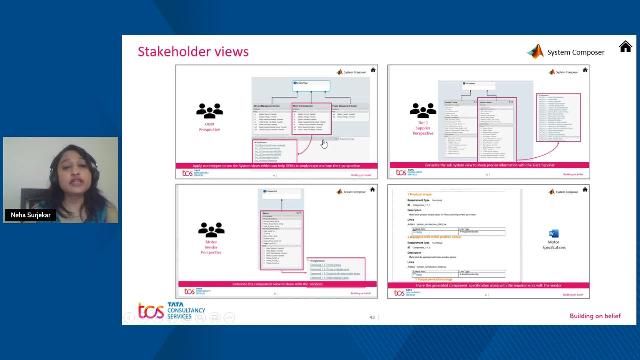

Neha Surjekar, Tata Consultancy Services

Why Models Are Essential to Digital Engineering

Brian Douglas, MathWorks

Women in Tech

Liz Ashforth, MathWorks

Dr. Angelita Howard, Morehouse School of Medicine

Dr. Yang Yan, Carrier Global Corporation

Tharikaa Ramesh Kumar, Auburn University

Tanya Morton, MathWorks

Claire Lucas, King’s College London

Angelique Janse van Rensburg, BMW

Dr. Babita Jajodia, Indian Institute of Information Technology Guwahati

Dr. Bama Muthuramalingam, Qualcomm

Mrs. Vaidehi Soman, CIPLA

Ms. Paduka Kannan, Tata Consultancy Services

Dr. Brooke Odle, Hope College

Dr. Feruza Amirkulova, San José State University

Milka Santana, Perfecto Labs

Lael Wentland, Oregon State University